
Thursday, August 10, 2017

Building Blockchain DApps on Service Fabric

https://blogs.msdn.microsoft.com/cloud_solution_architect/2017/07/29/building-blockchain-dapps-on-service-fabric/

Tuesday, December 06, 2016

Blue/Green Deployments in Service Fabric

https://blogs.msdn.microsoft.com/cloud_solution_architect/2016/10/17/bluegreen-deployments-in-service-fabric/

Saturday, October 08, 2016

Distributed Tracing in Service Fabric using Application Insights

https://blogs.msdn.microsoft.com/cloud_solution_architect/2016/10/06/distributed-tracing-in-service-fabric-using-application-insights/

Friday, September 16, 2016

Trying out Docker for Azure (Beta)

https://blogs.msdn.microsoft.com/cloud_solution_architect/2016/09/16/trying-out-docker-for-azure-beta/

...

Saturday, July 02, 2016

Creating FTP data movement activity for Azure Data Factory Pipeline

Walkthrough

https://blogs.msdn.microsoft.com/cloud_solution_architect/2016/07/02/creating-ftp-data-movement-activity-for-azure-data-factory-pipeline/

Summary

Additional Resources

- Developer Reference : https://azure.microsoft.com/en-us/documentation/articles/data-factory-sdks/

- Samples : https://azure.microsoft.com/en-us/documentation/articles/data-factory-samples/

- Monitor and Manage Pipelines : https://azure.microsoft.com/en-us/documentation/articles/data-factory-monitor-manage-app/

- Pricing: https://azure.microsoft.com/en-us/pricing/details/data-factory/

Wednesday, February 03, 2016

“Microservices” – What, Why, How and Challenges

“Microservices” is the new hot thing and is certainly at the peak of the hype cycle. This post is my summary after going through various articles, blogs, courses, videos and books, plus some very brief hands-on experience with Microservices. So let’s get started…

What are Microservices?

“Microservices is an approach to developing a single application as a suite of small services, each running in its own process and communicating with lightweight mechanisms, often an HTTP resource API.”

(Martin Fowler : http://martinfowler.com/articles/microservices.html)

Microservices are simply an architectural style for designing an application in a certain way. Some also refer to it as fine-grained SOA, or as an approach based on a subset of SOA principles. Here are some of the characteristics/tenets of Microservices:

- Services as Loosely coupled Components.

- Autonomous Services which can be Scaled, Deployed and Updated independently.

- Uses HTTP or another lightweight protocol.

- Technology, Language and Platform agnostic.

- Decentralized Data Management.

- Organized around business capabilities.
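To make the definition above a bit more concrete, here is a minimal sketch of what one such service might look like in Node.js. Everything in it is hypothetical (the “catalog” domain, the routes, and the in-memory Map standing in for the service’s own data store): it runs as its own process, owns its data, and exposes it only through a lightweight HTTP/JSON resource API, plus a /health endpoint that an orchestrator or load balancer could poll.

```javascript
const http = require('http');

// Hypothetical "catalog" service data. The in-memory Map stands in for the
// service's own private data store (decentralized data management).
const products = new Map([
  ['1', { id: '1', name: 'Widget' }],
  ['2', { id: '2', name: 'Gadget' }],
]);

// Small helper to write a JSON response with a status code.
function sendJson(response, status, body) {
  response.writeHead(status, { 'Content-Type': 'application/json' });
  response.end(JSON.stringify(body));
}

// Create (but do not start) the service's HTTP server.
function createCatalogService() {
  return http.createServer((request, response) => {
    if (request.method !== 'GET') {
      return sendJson(response, 405, { error: 'method not allowed' });
    }
    // Health endpoint an orchestrator or load balancer could poll.
    if (request.url === '/health') {
      return sendJson(response, 200, { status: 'ok' });
    }
    // Collection resource: GET /products
    if (request.url === '/products') {
      return sendJson(response, 200, [...products.values()]);
    }
    // Item resource: GET /products/:id
    const match = /^\/products\/([^\/?]+)$/.exec(request.url);
    const product = match && products.get(match[1]);
    if (product) {
      return sendJson(response, 200, product);
    }
    return sendJson(response, 404, { error: 'not found' });
  });
}
```

To run it as its own process you would call `createCatalogService().listen(3001)` (the port is arbitrary). Other services would then talk to it only over HTTP, never by reaching into its data directly.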

Why Microservices?

Microservices are the next evolution in software decomposition. They enable separation of concerns and reuse, which are essential in today’s cloud-oriented world. Here are my 3 reasons why you should choose a Microservices approach from a technology point of view:

- Scalability (Scaling to the first 10 million users)

- Faster delivery of features (State of the Art in Microservices)

- Better utilization of infrastructure to reduce costs

Does this mean every application should use a Microservices approach? Not necessarily…

One argument is to start with a traditional monolith and split the application into Microservices once the monolith becomes a problem. Others argue against starting with a monolith at all, since a monolith is very hard to split later.

I think the Microservices approach has its own challenges, and not every application needs to deal with them (at least initially). But every application should keep this decomposition mindset at the forefront of its architecture, so that it’s easier to split in the future if we need to.

Here is a great article explaining the four main benefits of Microservices from a business point of view: Innovate or Die: The Rise of Microservices.

How to create Microservices?

Here are some of the resources that can guide you in building Microservices:

Challenges

Distributed systems are inherently complex, and the Microservices approach takes this to the next level.

Here are some of the challenges that Microservices bring to the table:

- Organization

  - Service Ownership (from dev to support)

  - Service Contracts

  - Health Metrics

  - Handling Failures, Throttling and Backwards Compatibility

- Discovery

  - Service Registry

- Data Management

  - Transactional integrity with Decentralized Data Stores

- Deployment and Testing

  - Building a Continuous Delivery pipeline

- Monitoring

  - Publishing Internal and External Metrics (Latency, Requests per second, Error rate, etc.)

  - Log Aggregation

  - Correlating Requests
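Correlating requests is one of the easier challenges to start on. A common approach, sketched below under my own assumptions, is to give every incoming request a correlation ID (reusing the caller’s if one is present), pass it along on every downstream call, and stamp it on every log line so that aggregated logs can be joined back into a single request flow. The header name `x-correlation-id` is a widely used convention rather than a standard, and the helper names are hypothetical; `crypto.randomUUID()` requires a recent Node.js (14.17 or later).

```javascript
const crypto = require('crypto');

// Assumed header name: a common convention, not a standard.
const CORRELATION_HEADER = 'x-correlation-id';

// Reuse the caller's correlation ID, or start a new one if this service
// is the first hop. Node lower-cases incoming header names.
function getCorrelationId(incomingHeaders) {
  const existing = incomingHeaders[CORRELATION_HEADER];
  return typeof existing === 'string' && existing.length > 0
    ? existing
    : crypto.randomUUID();
}

// Headers for a downstream call, so the same ID flows through every service.
function downstreamHeaders(correlationId, extraHeaders = {}) {
  return { ...extraHeaders, [CORRELATION_HEADER]: correlationId };
}

// Prefix each log line with the ID so aggregated logs can be grouped per request.
function logLine(correlationId, message) {
  return `[${correlationId}] ${message}`;
}
```

Inside an `http.createServer` handler you would call `getCorrelationId(request.headers)` once, then use the result both for `logLine` and for the headers of any outgoing `http.request`.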

I hope this gives a good summary of the Microservices world as of today.

Enjoy Microservices…

Tuesday, January 26, 2016

Building Next Generation Web Apps…

Here are the slides from my internal talk presented at Neudesic Office Day:

https://speakerdeck.com/jomit/building-next-generation-web-apps

Some of the code for the talk can be found at:

https://github.com/jomit/Node-Cloud

https://github.com/jomit/Node-ES6-Trials

Resources:

- Tomorrow's World of Web Development : https://channel9.msdn.com/Events/FutureDecoded/Future-Decoded-2015-UK/19

- Creating a front end with ASP.net 5 on Service Fabric : https://azure.microsoft.com/en-us/documentation/articles/service-fabric-add-a-web-frontend/

- Introduction to NoSQL - Martin Fowler : https://www.youtube.com/watch?v=qI_g07C_Q5I

- Flipkart Lite Video : https://www.youtube.com/watch?v=MxTaDhwJDLg


Sunday, December 27, 2015

Node on Raspberry Pi 2

Installing Node on the Pi

- sudo apt-get update (Update the Operating System)

- curl -sL https://deb.nodesource.com/setup | sudo bash - (Set up the Node repo source)

- sudo apt-get install nodejs (Install node)

- node -v or npm --version (Verify node is installed correctly)

Create your first Node web app on the Pi

Create a new folder under /home/pi named “nodeapps” and create a new file “app.js” in this folder:

- nano app.js

- Add the following code to the file and save it:

```javascript
// Load Node's built-in HTTP module
var http = require('http');

// Respond to every request with a plain-text greeting
var server = http.createServer(function (request, response) {
  response.writeHead(200, {"Content-Type": "text/plain"});
  response.end("Look ma I am running Node on my Raspberry Pi !\n");
});

// Listen on port 8080 (on all network interfaces)
server.listen(8080);

console.log("Server running at http://127.0.0.1:8080/");
```

- node app.js (Start the app)

- Visit “http://127.0.0.1:8080” or “http://localhost:8080” in the Pi browser to see the app working. If your Raspberry Pi is connected to your network, you can also view the app from another machine using the Pi’s IP address and port 8080.

- Use Ctrl + C to terminate the node app.

If you terminate the node app incorrectly, you might get an ‘EADDRINUSE’ error message the next time you start it, because port 8080 will still be in use.

To resolve this you can either check for the listening event or kill all the node processes as answered here.
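One way to check for these events in code is to listen for the server's 'error' event, whose `err.code` is 'EADDRINUSE' when the port is taken, together with the 'listening' event once the bind succeeds. The sketch below assumes you would rather fall back to the next free port than exit; `listenWithFallback` and its retry policy are hypothetical and not from the original post. It relies on Node allowing `listen()` to be called again after a failed `listen()`.

```javascript
const http = require('http');

// Hypothetical helper (not part of Node's API): listen on `port`, and if it
// is already in use (EADDRINUSE), try the next port, up to `maxAttempts`
// ports in total. `callback(err, port)` receives the port finally bound.
function listenWithFallback(server, port, maxAttempts, callback) {
  let attempts = 0;

  function tryListen(p) {
    attempts += 1;

    const onListening = () => {
      server.removeListener('error', onError);
      callback(null, p);
    };

    const onError = (err) => {
      server.removeListener('listening', onListening);
      if (err.code === 'EADDRINUSE' && attempts < maxAttempts) {
        console.log(`Port ${p} is already in use, trying ${p + 1}...`);
        tryListen(p + 1);
      } else {
        callback(err);
      }
    };

    server.once('listening', onListening);
    server.once('error', onError);
    // After a failed listen(), Node lets us call listen() again on the same server.
    server.listen(p);
  }

  tryListen(port);
}
```

In app.js you would replace `server.listen(8080)` with `listenWithFallback(server, 8080, 5, (err, port) => { ... })` and log the port that was actually bound.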

Enjoy JavaScripting on Raspberry Pi…

Sunday, November 29, 2015

ASP.net 5 (everything you should know so far…)

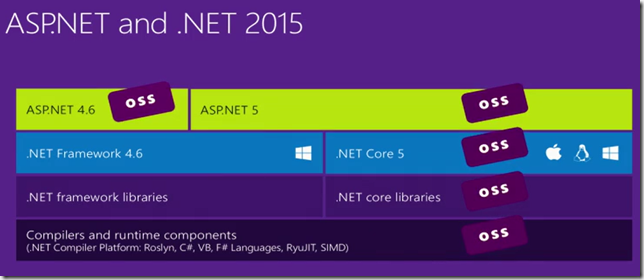

ASP.net 5 is the new (and significantly redesigned) open source and cross-platform framework for building .NET web applications which can run on both .NET Framework 4.6 and .NET Core.

Getting Started

- Install : https://get.asp.net/

- Learn : https://mva.microsoft.com/en-US/training-courses/introduction-to-asp-net-5-13786

- Docs : https://docs.asp.net/en/latest/

Tools and Terminologies

- DNX – Short for .NET Execution Environment. It is an SDK + runtime environment for running .NET applications on Windows, Mac and Linux.

- DNVM – .NET Version Manager

- DNX – .NET Execution Environment

- DNU – .NET Development Utilities

- OWIN – Open web interface for .NET

- WebListener – Self-hosted, Windows-only web server for hosting ASP.NET applications outside of IIS.

- Kestrel – Cross platform web server for ASP.net 5 based on libuv (the async I/O library used by Node.js)

- Katana - OWIN implementations for Microsoft servers and frameworks.

- OmniSharp – Makes cross platform .NET development much easier.

- See Scott Hanselman’s post for more details on how it works.


- Yeoman – Scaffolding tool for modern web apps.

- Yeoman generator for ASP.net 5 apps

Additional Resources

- Deep Dive ASP.net 5 (Tech Days Sweden)

- Mastering ASP.NET 5 without growing a beard

- Building Web App with ASP.net 5, MVC 6, EF7 and AngularJS (pluralsight course by Shawn Wildermuth)

- Benchmarks for ASP.net 5

- How to Run ASP.net 5 on a Raspberry Pi 2

- ASP.net 5 and SPA (Microsoft.AspNet.NodeServices)

Enjoy modern web development….


Friday, November 06, 2015

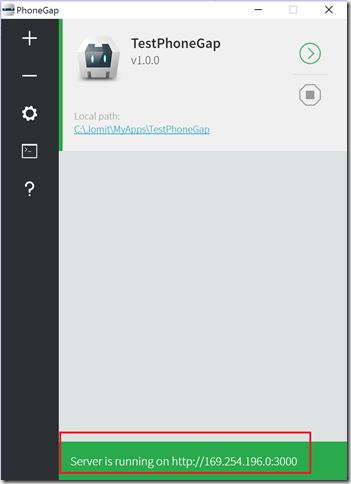

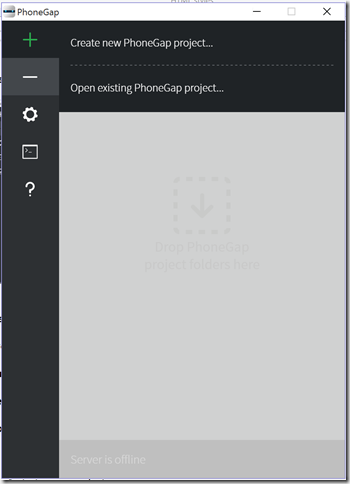

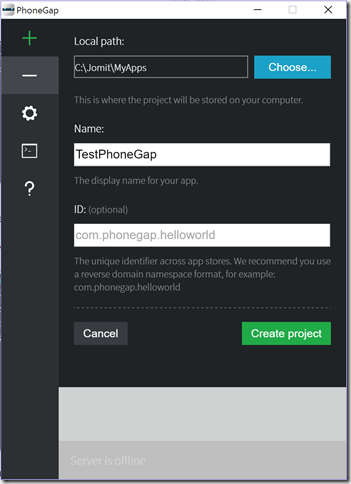

Trying out PhoneGap Desktop App on Windows 10 for Windows Phone Apps

PhoneGap Desktop app is basically a visual interface for developing PhoneGap apps easily. For this post I am using the latest beta release 0.1.11 from GitHub.

I followed the step-by-step instructions from here, but they did not work for me. I had to do some additional steps and use the old PhoneGap CLI to make it work.

Here are the high-level steps I performed to make it work on my Windows 10 machine:

- Install PhoneGap Desktop App (download beta release 0.1.11 from here)

- Install Mobile App

- Create New App using Desktop App

- Run the App

- Resolve the Issue

- Disable VirtualBox or VMware Network Adapters

- Run CLI (You can install the CLI from here)

- App Working

- Connecting the Mobile App

- Editing it in Visual Studio Code and Live Update

Enjoy JavaScripting on any device…


Wednesday, November 04, 2015

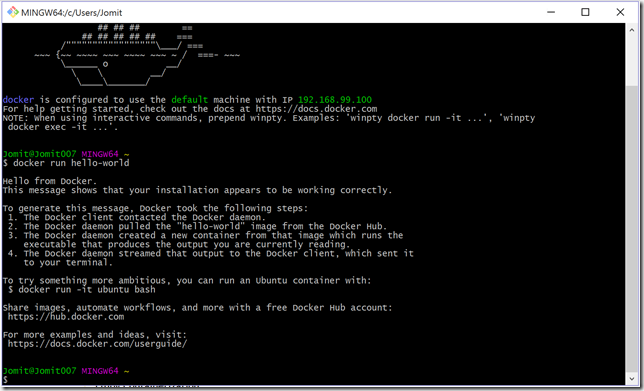

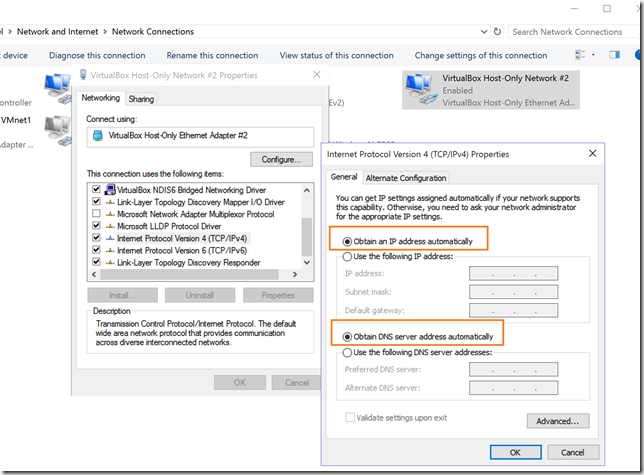

Trying out Docker Toolbox on Windows 10

A couple of months back, the Docker team announced a new installer, Docker Toolbox, which replaces the Boot2Docker installer.

I successfully upgraded my machine to Windows 10 (on the second attempt) and thought I would try this out. So far I love how easy it is to install and get started.

Docker Toolbox includes the following Docker tools:

- Docker Machine for running the docker-machine binary

- Docker Engine for running the docker binary

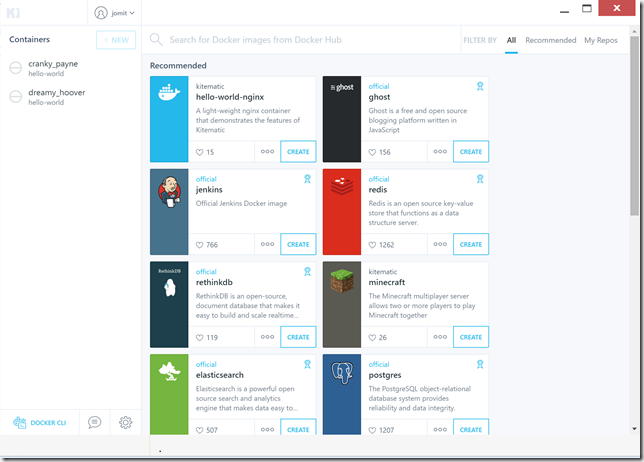

- Kitematic, the Docker GUI

- a shell preconfigured for a Docker command-line environment

- Oracle VM VirtualBox

(Note: If you already have VirtualBox installed, make sure the IPv4 settings for the adapter are set to obtain the IP address automatically.)

Docker Quickstart Terminal

Kitematic (Alpha)

You will need a Docker Hub account to sign in. Kitematic lets you manage all your containers and add new ones from the hub.

The next step will be to add some Node goodness (Dockerizing a Node.js web app) and sprinkle some “Microservices” dust . . .

Enjoy Containerization . . .


Tuesday, November 03, 2015

Resources that helped me start learning Node.js

- Pluralsight Course - “Node.js for .NET Developers”

- MVA Course – “Building Apps with Node.js Jump Start”

- Node Weekly to keep up with all the latest updates in the Node.js world

Enjoy JavaScripting !!!


Sunday, August 09, 2015

Useful resources to write better Angular Code and get ready for Angular 2.0

- Angular Style Guide by John Papa (an absolute must-read for everyone before writing Angular 1.0 code)

- Day 1 Keynote of ng-conf 2015 talking about the past and future of Angular

- Starting with Angular 2.0 : https://angular.io/

- Road to NG2 : http://devchat.tv/adventures-in-angular/048-aia-the-road-to-ng2

ECMAScript 6 is bringing a lot of goodness, and JavaScript in the future might look quite different from what we see today.

Frameworks like Angular, Backbone and Ember provide nice wrappers to fill the gaps, but ultimately we should keep our eye on ES6.

Here is how you can try ES6 today using compilers like Babel and Traceur: http://jomit.blogspot.com/2015/02/trying-ecmascript-6-javascript-6.html

Enjoy JavaScripting….

Wednesday, April 29, 2015

Build 2015–Day 1 Keynote (takeaways)

Azure

- Docker for Windows (docker client for windows)

- Mix and Match linux and windows containers and run it on any server.

- Debugging apps within containers in linux on windows server using Visual Studio.

- .Net Core RC (for linux, windows and mac)

- App Service

- Visual Studio Code (download)

- A free code editor for Mac, Linux and Windows

- SQL DB Elastic Pool

- For managing lots of databases in SaaS type scenarios

- SQL Data Warehouse

- Directly competes with AWS Redshift, and it's better.

- Data Lake

- Store and process infinite data.

Office

- Office Apps

- New Unified Graph API to access all the data from 1 place

- ‘Delve’ App

- ‘Sway’ App

Windows

- Windows 10 Universal apps

- Windows Store Apps now support (this is freaking awesome !!!!!)

- Web Sites/Web Apps

- .Net and Win32 Apps (using app virtualization)

- Android Java/ C++ Apps

- Objective-C Apps

- Compile Objective-C code using Visual Studio on Windows

- Hololens

- Microsoft Edge (final name for ‘Project Spartan’, the new browser on Windows 10)

Saturday, April 11, 2015

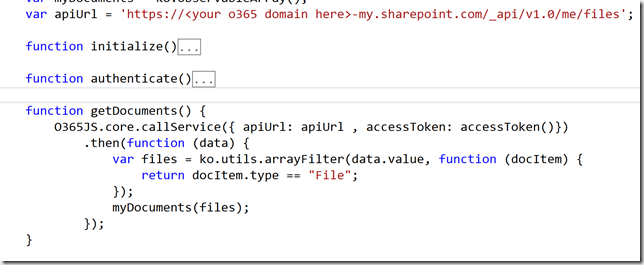

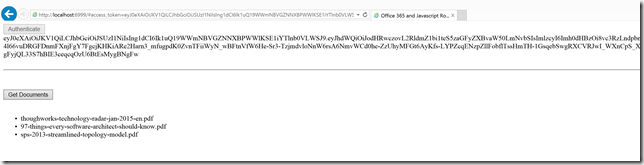

New App type, CORS support and Office 365 APIs with vanilla-js

A few months back, Microsoft introduced several new APIs for Office 365 that span SharePoint, Exchange and Lync. Rather than having developers learn each of these platforms, they simplified the general concepts and also introduced a new type of app: “Office 365 external Apps”.

These apps look similar to “Provider Hosted Apps”, but they have some key differences in the way you register and launch them. For more details visit: http://www.sharepointnutsandbolts.com/2014/12/office-365-apps-and-sharepoint-apps-comparison.html

Along with this new type of app, Microsoft has also enabled cross-origin resource sharing (CORS) support for the Office 365 APIs.

This means we do not need any special client libraries to authenticate or to access these APIs.

Let’s see how we can register this new type of app and then integrate it using vanilla-js.

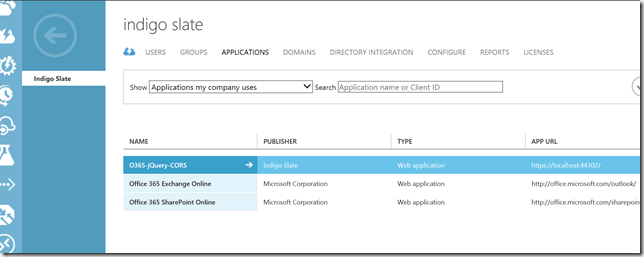

Step 1 – Register your App

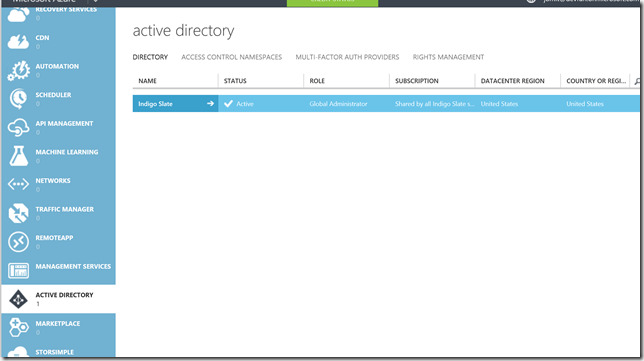

- Sign in to Azure Management Portal

- From Active Directory node select the Active Directory linked to your Office 365 subscription

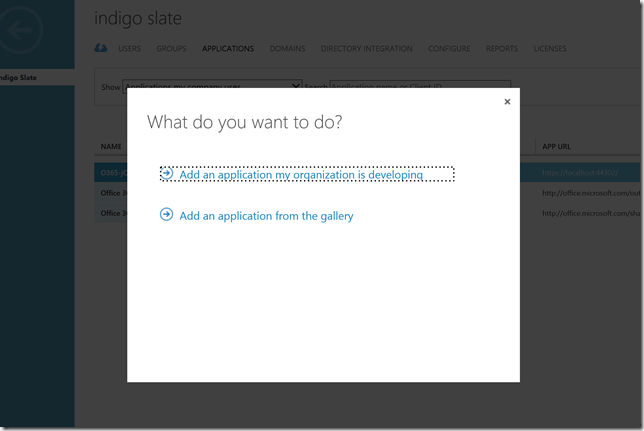

- Click “Applications” tab from the top navigation

- Click “Add” from the bottom of the screen and select “Add an application my organization is developing”

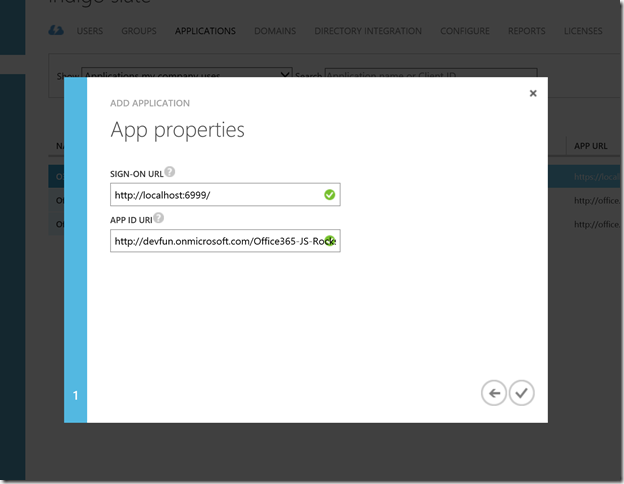

- Provide the app name and select Web application and/or web API as Type

- Provide the Sign On URL of the web app in which you are integrating the APIs

- Provide any unique ID of your App in App ID URI

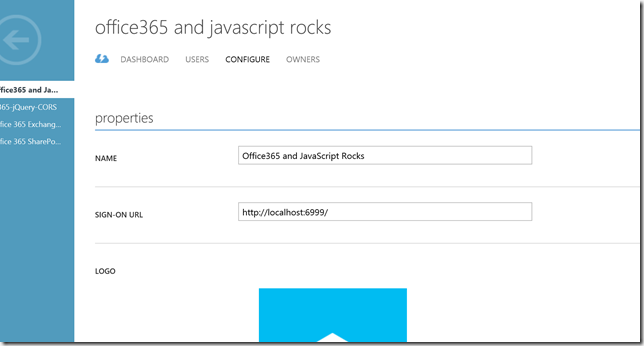

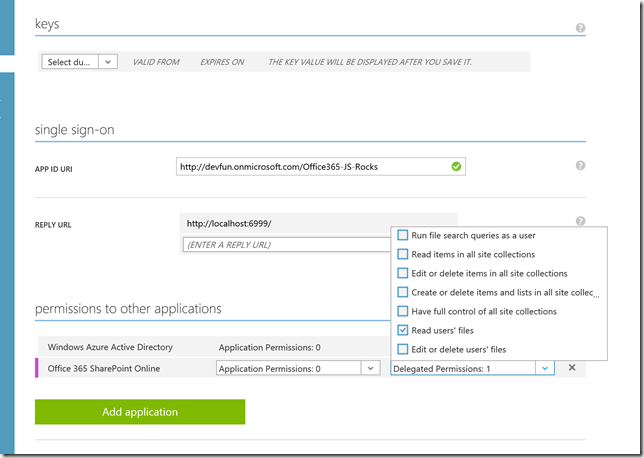

Step 2 – Configure permissions for App

- Open the application and click “Configure” tab from the navigation

- Scroll down and click “Add Application” under “permissions to other applications”

- Click “+” next to Office 365 SharePoint Online and save.

- For Office 365 SharePoint Online, open the delegated permissions dropdown, select the appropriate permissions and save the application

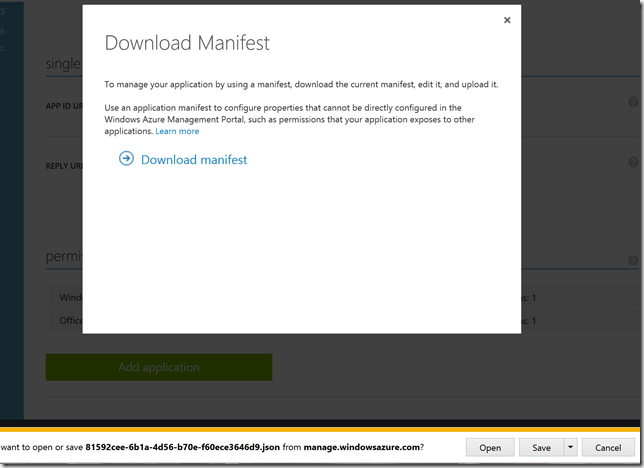

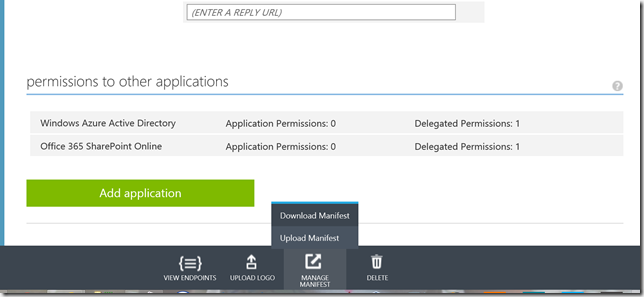

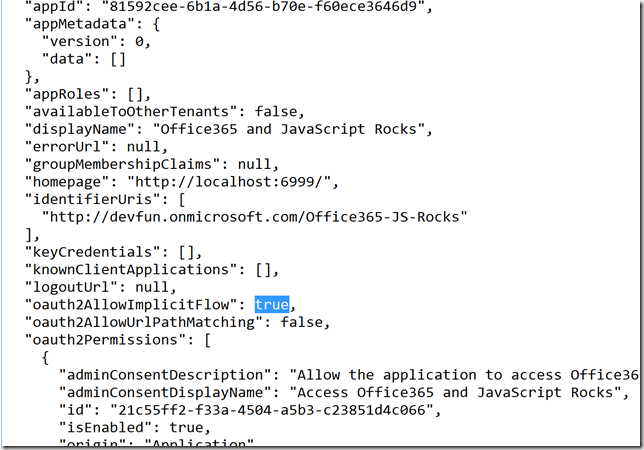

Step 3 – Configure the App to allow OAuth implicit grant flow

- On the configure tab, click on “Manage Manifest” button from the bottom and download the manifest

- Set the value of "oauth2AllowImplicitFlow" to true and upload the manifest file.

- The application registration is complete now…

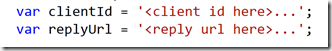

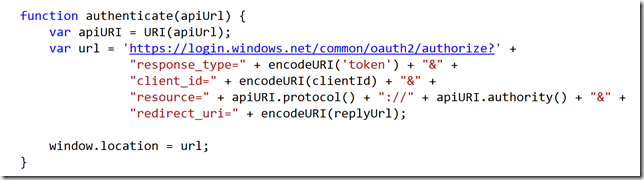

Step 4 – Integrate it with a Web Page

See the complete code on GitHub.
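As a rough standalone sketch of the pieces Step 4 needs (my own assumptions, not the code from that repo): redirect the browser to the Azure AD authorize endpoint with `response_type=token`, read the `access_token` back from the URL fragment after the redirect, and then call the SharePoint REST API directly via CORS with a Bearer header. The client ID, redirect URI and tenant URL below are hypothetical placeholders for the values from Steps 1 and 2, and the endpoint and parameter names follow the Azure AD v1 implicit grant as I understand it, so verify them against the current Azure AD documentation.

```javascript
// Hypothetical placeholders: use the values from your own app registration.
const CLIENT_ID = '00000000-0000-0000-0000-000000000000';
const REDIRECT_URI = 'https://localhost:44300/';     // the Sign On URL from Step 1
const RESOURCE = 'https://contoso.sharepoint.com';   // your SharePoint Online tenant
// Assumed Azure AD v1 authorize endpoint for the implicit grant.
const AUTHORIZE_ENDPOINT = 'https://login.microsoftonline.com/common/oauth2/authorize';

// Build the URL the browser is sent to for sign-in (implicit grant).
function buildAuthorizeUrl(state) {
  const url = new URL(AUTHORIZE_ENDPOINT);
  url.searchParams.set('response_type', 'token');
  url.searchParams.set('client_id', CLIENT_ID);
  url.searchParams.set('resource', RESOURCE);
  url.searchParams.set('redirect_uri', REDIRECT_URI);
  url.searchParams.set('state', state); // echoed back, used to detect forged redirects
  return url.toString();
}

// Extract the access token from the fragment Azure AD redirects back with,
// e.g. "#access_token=...&token_type=Bearer&expires_in=3599&state=...".
function parseTokenFromHash(hash, expectedState) {
  const params = new URLSearchParams(hash.replace(/^#/, ''));
  if (params.get('error')) {
    throw new Error(params.get('error_description') || params.get('error'));
  }
  if (params.get('state') !== expectedState) {
    throw new Error('State mismatch in redirect');
  }
  const token = params.get('access_token');
  if (!token) {
    throw new Error('No access_token in redirect');
  }
  return token;
}

// Call the SharePoint REST API directly from the browser (CORS) with the token.
async function getWebTitle(accessToken) {
  const response = await fetch(`${RESOURCE}/_api/web?$select=Title`, {
    headers: {
      Authorization: `Bearer ${accessToken}`,
      Accept: 'application/json;odata=verbose',
    },
  });
  if (!response.ok) {
    throw new Error(`SharePoint returned ${response.status}`);
  }
  const data = await response.json();
  return data.d.Title; // odata=verbose wraps the result in "d"
}
```

In the page itself, you would store a random `state` in `sessionStorage` and call `location.assign(buildAuthorizeUrl(state))` when there is no token yet; on the way back, pass `location.hash` and the stored state to `parseTokenFromHash` before calling `getWebTitle`.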

Other Resources:

- Setup Office 365 dev environment

- ADAL JS & CORS with Office 365 API’s

- Key skills for SharePoint/Office 365 developer

- .Net and JavaScript client libraries for Office 365 API’s

- Office 2013 modern authentication public preview


Tuesday, February 24, 2015

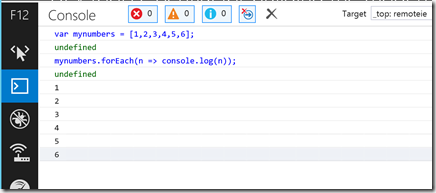

Trying ECMAScript 6 (JavaScript 6) Features

I have been working with JavaScript for over 5 years now. In the last 3 years, I have written more JavaScript code than C# code. And still there are parts of the language that amaze me. Who would have thought that 10 days of work could give rise to the assembly language of the web.

What is ECMAScript 6?

ECMAScript 6 is the upcoming version of the ECMAScript standard. This standard is targeting ratification in June 2015. ES6 is a significant update to the language, and the first update to the language since ES5 was standardized in 2009. See the draft ES6 standard for full specification of the ECMAScript 6 language.

See detailed list of all the features (along with examples) here : https://github.com/lukehoban/es6features
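To get a feel for the new syntax, here is a small sketch exercising a handful of the features from that list: `let`/`const`, arrow functions, template literals, destructuring with defaults, classes, spread, `Set` and generators. The names are my own; it runs as-is in current Node.js or browsers, while in early 2015 it would have needed a compiler such as Babel or Traceur.

```javascript
// let/const and arrow functions
const square = (x) => x * x;

// Template literals
const greet = (name) => `Hello, ${name}!`;

// Parameter destructuring with a default value
function describeApp({ name, lang = 'JavaScript' }) {
  return `${name} is written in ${lang}`;
}

// Classes, inheritance and super calls
class Shape {
  constructor(name) {
    this.name = name;
  }
  area() {
    return 0;
  }
  toString() {
    return `${this.name} with area ${this.area()}`;
  }
}

class Rect extends Shape {
  constructor(width, height) {
    super('Rect');
    this.width = width;
    this.height = height;
  }
  area() {
    return this.width * this.height;
  }
}

// Spread syntax and the new Set collection
const nums = [1, 2, 3];
const squares = [...nums, 4].map(square); // [1, 4, 9, 16]
const unique = new Set([1, 1, 2, 3, 3]); // contains 1, 2, 3

// Generators: an infinite Fibonacci sequence, consumed lazily
function* fibonacci() {
  let [a, b] = [0, 1];
  while (true) {
    yield a;
    [a, b] = [b, a + b];
  }
}
```

For example, `String(new Rect(2, 3))` gives "Rect with area 6", and taking the first five values from `fibonacci()` gives 0, 1, 1, 2, 3.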

Which JavaScript Engines are implementing these features?

Pretty much every browser vendor has started implementing some features, if not all.

Here is a live compatibility dashboard : http://kangax.github.io/compat-table/es6/

And as of today, IE is ahead of everyone in the ‘Desktop Browsers’ category.

How can I try some of these ES6 features today?

Here are your options:

- Within Browsers

- IE Technical Preview (using Azure RemoteApp)

- Firefox (30.0 or greater…)

- Chrome (40 or greater)

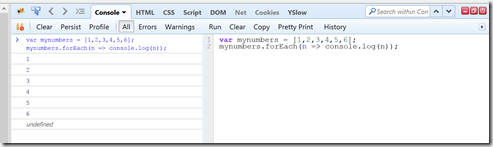

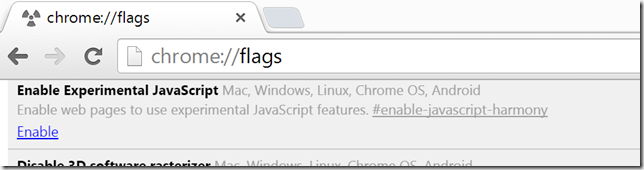

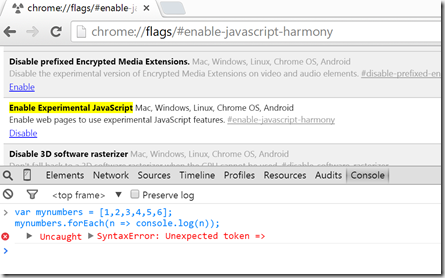

First, enable the new JavaScript features using Chrome flags:

Arrow functions are still not available in Chrome, but there might be other features that you can play around with…

- Using Compilers/polyfills

- Traceur : https://github.com/google/traceur-compiler

- Babel : https://babeljs.io/docs/learn-es6/

Whether you like it or not, JavaScript is here to stay and will play a key role in pushing the web forward.

Thursday, February 19, 2015

Saturday, January 24, 2015

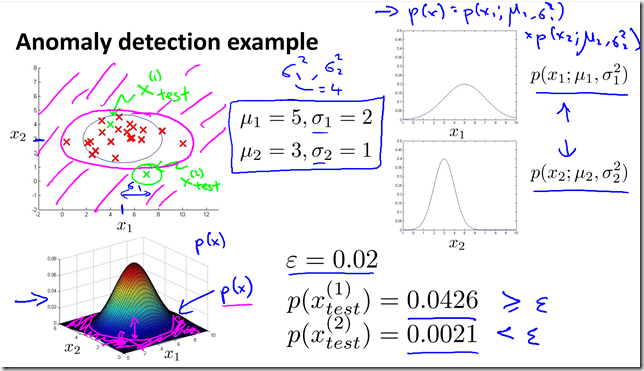

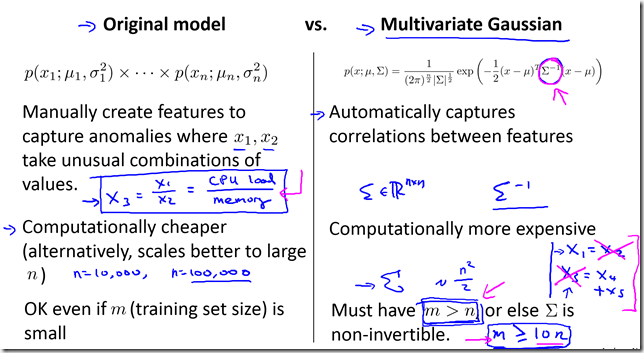

Machine Learning Course Summary (Part 6)

Summary of Stanford's Machine Learning class by Andrew Ng

- Part 1

- Supervised vs. Unsupervised learning, Linear Regression, Logistic Regression, Gradient Descent

- Part 2

- Regularization, Neural Networks

- Part 3

- Debugging and Diagnostic, Machine Learning System Design

- Part 4

- Support Vector Machine, Kernels

- Part 5

- K-means algorithm, Principal Component Analysis (PCA) algorithm

- Part 6

- Anomaly detection, Multivariate Gaussian distribution

- Part 7

- Recommender Systems, Collaborative filtering algorithm, Mean normalization

- Part 8

- Stochastic gradient descent, Mini batch gradient descent, Map-reduce and data parallelism

Anomaly detection

- Examples

- Fraud Detection

- x(i) = features of user i's activities.

- Model p(x) from data

- Identify unusual users by checking which have p(x) < epsilon

- Manufacturing

- Monitoring computers in a data center


- Algorithm

- Choose features x(i) that you think might be indicative of anomalous examples.

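- Fit parameters $\mu_1, \dots, \mu_n, \sigma_1^2, \dots, \sigma_n^2$, where $\mu_j = \frac{1}{m} \sum_{i=1}^{m} x_j^{(i)}$ and $\sigma_j^2 = \frac{1}{m} \sum_{i=1}^{m} \left( x_j^{(i)} - \mu_j \right)^2$

- Given new example x, compute p(x): $p(x) = \prod_{j=1}^{n} p(x_j; \mu_j, \sigma_j^2) = \prod_{j=1}^{n} \frac{1}{\sqrt{2\pi}\, \sigma_j} \exp\left( -\frac{(x_j - \mu_j)^2}{2 \sigma_j^2} \right)$

- Anomaly if $p(x) < \varepsilon$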

- Aircraft engines example

- 10000 good (normal) engines

- 20 flawed engines (anomalous)

- Alternative 1

- Training set: 6000 good engines

- CV: 2000 good engines (y=0), 10 anomalous (y=1)

- Test: 2000 good engines (y=0), 10 anomalous (y=1)

- Alternative 2:

- Training set: 6000 good engines

- CV: 4000 good engines (y=0), 10 anomalous (y=1)

- Test: 4000 good engines (y=0), 10 anomalous (y=1)

- Algorithm Evaluation

- Anomaly detection vs. Supervised learning

| Anomaly detection | Supervised learning |
|---|---|
| Very small number of positive examples; large number of negative examples | Large number of positive and negative examples |
| Many different “types” of anomalies. Hard for any algorithm to learn from positive examples what the anomalies look like; future anomalies may look nothing like any of the anomalous examples we’ve seen so far. | Enough positive examples for the algorithm to get a sense of what positive examples are like; future positive examples are likely to be similar to ones in the training set. |
| Fraud detection | Email spam classification |
| Manufacturing (e.g. aircraft engines) | Weather prediction (sunny/rainy/etc.) |
| Monitoring machines in a data center | Cancer classification |

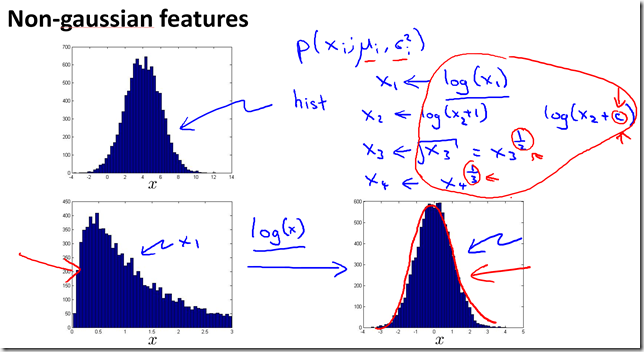
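The fit, compute and threshold steps of the anomaly detection algorithm map almost line for line onto code. The sketch below is my own (the function names `fitGaussian`, `probability` and `isAnomaly` are not from the course); it assumes every feature has non-zero variance and that `epsilon` has already been chosen, for example by evaluating candidate values on the labelled cross-validation set as in the aircraft engines example.

```javascript
// Fit mu_j and sigma_j^2 for every feature j (dividing by m, as in the course).
// X is an array of m examples, each an array of n numeric features.
function fitGaussian(X) {
  const m = X.length;
  const n = X[0].length;
  const mu = new Array(n).fill(0);
  const sigma2 = new Array(n).fill(0);
  for (const x of X) {
    for (let j = 0; j < n; j++) mu[j] += x[j] / m;
  }
  for (const x of X) {
    for (let j = 0; j < n; j++) sigma2[j] += (x[j] - mu[j]) ** 2 / m;
  }
  return { mu, sigma2 };
}

// p(x) = product over j of the univariate Gaussian density N(x_j; mu_j, sigma_j^2).
// Assumes every sigma2[j] > 0.
function probability(x, { mu, sigma2 }) {
  let p = 1;
  for (let j = 0; j < x.length; j++) {
    const diff = x[j] - mu[j];
    p *= Math.exp(-(diff * diff) / (2 * sigma2[j])) / Math.sqrt(2 * Math.PI * sigma2[j]);
  }
  return p;
}

// Flag x as an anomaly when p(x) < epsilon.
function isAnomaly(x, model, epsilon) {
  return probability(x, model) < epsilon;
}
```

For example, with the four training examples `[[1, 10], [2, 11], [3, 12], [2, 11]]` the fitted parameters are μ = [2, 11] and σ² = [0.5, 0.5]. The centre point `[2, 11]` gets p(x) = 1/π ≈ 0.32, while `[10, 20]` gets a vanishingly small p(x) and is flagged for any reasonable epsilon.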


- Choose what features to use

- Plot a histogram and see the data

- Original vs. Multivariate Gaussian model